The Moment a Photograph Starts Breathing

You know that feeling when a tool does something you didn’t think was possible — not in a sci-fi way, but in a quiet, practical way that changes how you think about your own work? That happened to me three months ago. I uploaded a portrait to an AI platform, pressed a button, and watched a still image begin to move. Not a glitchy approximation. Actual, coherent motion. I sat there for a full minute, replaying the clip.

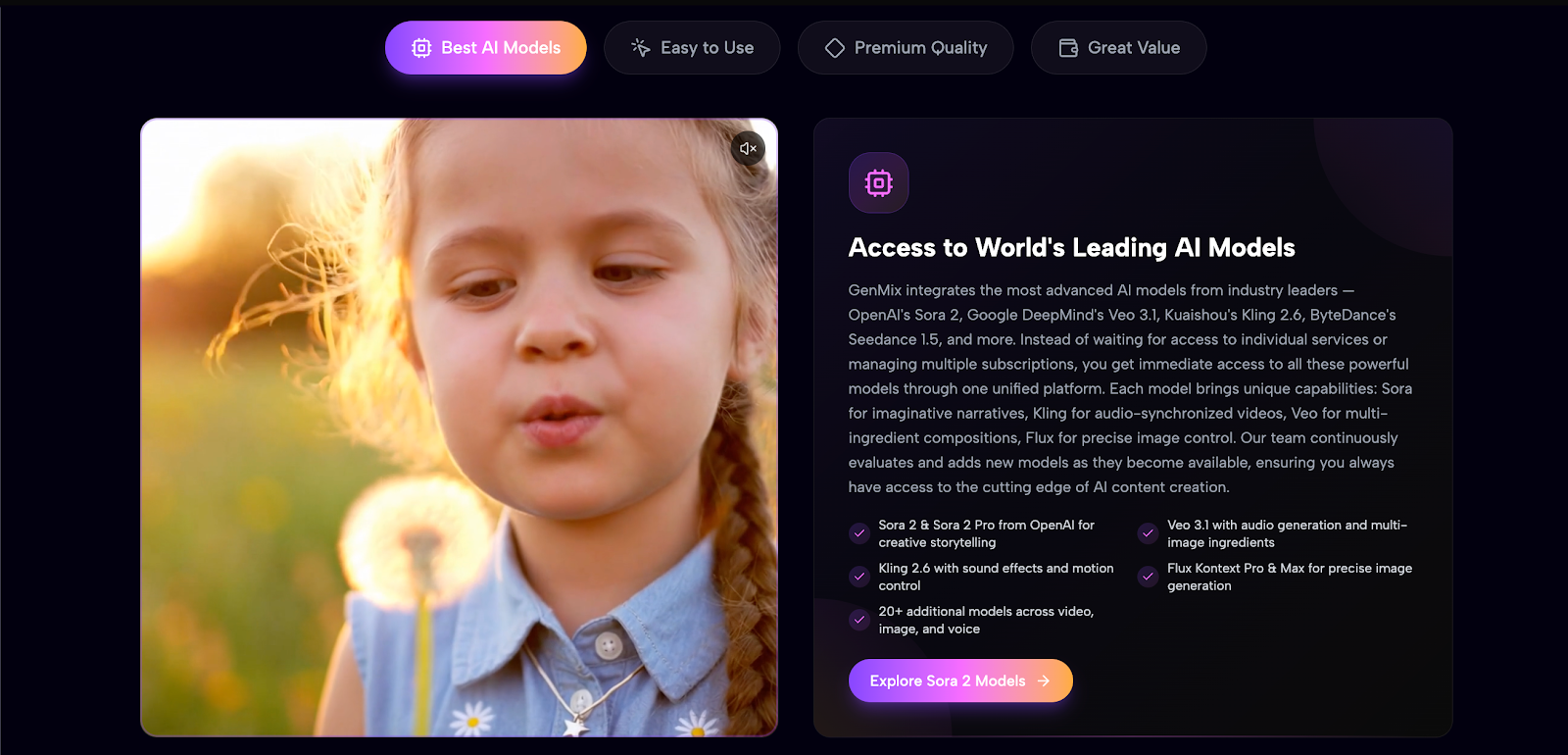

Not because it was perfect. Because it was possible.

I’ve spent the better part of six years creating content. Photography, brand campaigns, social media strategy, influencer partnerships. And for most of that time, the gap between what I could imagine and what I could actually produce came down to one thing: motion. Static images were my comfort zone. Video was someone else’s territory: someone with a camera crew, editing software, and a budget I didn’t have. That changed when I started experimenting with GenMix AI.

The Space Between Having an Idea and Seeing It Exist

Most creative tools solve technical problems. They give you more control over colour grading, more options for transitions, more precision in your edits. AI motion tools solve something more fundamental: they collapse the distance between having an idea and seeing it exist in the world. You upload a photo. You choose a style or template. Ninety seconds later, the image breathes. It sways. It dances. Not perfectly — but with enough coherence that your brain stops seeing a trick and starts seeing a possibility.

That shift is everything. Because once you stop asking “can I make this?” and start asking “what should I make?” — the entire creative equation changes. I didn’t arrive at this conclusion through theory. I arrived at it through dozens of late-night experiments, failed generations, and the occasional result that made me say out loud, to no one, “Wait — that actually works.”

Testing the Boundaries: Dance Effects

The real test for any motion AI is dance. Coordinated body movement, shifting weight, fabric responding to momentum, hair catching light at different angles — it’s where every shortcut becomes visible and every weakness gets exposed.

I ran a full-body portrait through the AI twerk generator expecting the usual: distorted limbs, a face that drifts between frames, backgrounds that melt like wet watercolour.

What I got was surprisingly watchable.

The proportions held throughout the clip. The movement had actual rhythm — not random oscillation, but something that felt intentionally choreographed. The clothing responded to motion in a way that was physically plausible. The background stayed stable, which alone put it ahead of everything I’d tried before.

Was it choreography I’d want in a professional music video? No. Was it engaging enough for Instagram Reels and TikTok? Without question.

That distinction matters more than most people realise. The majority of creators don’t need cinema-grade output. They need content — consistent, eye-catching, shareable content that stops the scroll. And for that purpose, the current generation of AI motion tools has crossed the threshold from novelty to genuinely useful.

What Actually Changed Between 2024 and Now

The honest answer is: everything underneath the surface.

Two years ago, AI-generated motion was a parlour trick. Faces melted between frames. Limbs stretched into anatomically impossible configurations. Backgrounds dissolved into abstract nightmares. The technology existed, but it wasn’t usable — not for anything you’d actually want to share.

The current generation of models, such as Sora 2, Veo 3.1 and Kling 3.0, represents a fundamental architectural shift. These aren’t better versions of the old approach. They’re built on entirely different foundations. They don’t just animate pixels sequentially. Judging by their output, they account for spatial relationships, body structure, lighting continuity, even the physics of fabric and hair.

| Capability | Early AI Motion (2024) | Current Generation (2026) |
| --- | --- | --- |
| Body coherence | Distortion after 2-3 seconds | Stable proportions through entire clip |
| Facial identity | Features drift noticeably between frames | Recognisable and consistent throughout |
| Background stability | Warping, melting, colour bleeding | Clean, consistent, no artifacts |
| Clothing physics | Static or randomly deforming | Responds naturally to body movement |
| Generation speed | 5-10 minutes per attempt | 60-90 seconds |
| Creative options | A handful of generic presets | 100+ curated effect templates |
| Multi-model access | One model per platform | Multiple models, switch per project |

The difference isn’t incremental. It’s categorical. And it happened faster than most creators noticed — which means there’s still a window where early adopters have a genuine advantage.

From Motion to Identity: When Style Becomes Brand

Video effects opened one door. Image style transfer opened another entirely.

Brand consistency has been my quiet obsession for years. Every platform, every post, every story needed visuals that felt like they belonged together: same aesthetic, same energy, same personality. Stock photos couldn’t deliver that. Different photographers, different lighting philosophies, different colour palettes. Custom illustration was beautiful but prohibitively expensive for the volume of content social media demands.

Then I tried the South Park character creator.

The AI didn’t paste a filter over my face. It reconstructed me — my jawline, my eye spacing, my hairline, even the way I tilt my head — within the visual conventions of the chosen art style. The result was an avatar that was unmistakably me, rendered in a consistent aesthetic I could deploy across every single platform.

I used these stylised portraits for my Twitter profile, my newsletter header, my LinkedIn banner, and my Instagram highlights. For the first time, my content had a visual identity that followers could recognise at a glance. Not because I hired a branding agency. Because an AI understood both my face and an art style well enough to bridge them convincingly.

That’s not a gimmick. That’s a genuine creative capability that didn’t exist eighteen months ago.

The Workflow That Emerged

I didn’t plan a workflow. One emerged naturally over several weeks of experimentation:

- Monday mornings: 30-40 minutes generating the week’s visual assets. Upload photos from the weekend, select effect templates, curate the best outputs. This produces 8-12 pieces of content.

- Tuesdays and Wednesdays: Post-processing in CapCut for video overlays, Canva for image branding and text. The AI handles the creative generation. I handle context, captions, and scheduling.

- Thursdays: Schedule everything across platforms — TikTok, Instagram, Twitter, LinkedIn. Each piece gets platform-specific cropping and caption treatment.

- Fridays: Review engagement metrics. Which effects resonated? Which fell flat? Which platforms responded best to motion versus stylised stills? Adjust next week’s template selection accordingly.

Total weekly investment in visual content creation: under two hours. The production work that used to consume an entire working day (searching stock libraries, editing clips frame by frame, wrestling with export settings) is now mostly done before lunch on Monday.

The freed-up time goes into what actually grows an audience: writing better captions, engaging with comments, building relationships with other creators, planning collaborations. The production bottleneck is gone.
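The Friday review described above comes down to simple arithmetic: pool interactions and impressions per effect (or per platform) and compare the ratios. Here is a minimal sketch of one way to tally it. The `Post` fields, the effect labels and the sample numbers are all placeholders invented for illustration, not figures from any real campaign, and "interactions" is whatever you decide to count.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Post:
    platform: str      # e.g. "TikTok"
    effect: str        # hypothetical template label
    impressions: int
    interactions: int  # likes + comments + shares, however you count them


def engagement_by(posts, key):
    """Return {group: interactions / impressions}, pooled per group."""
    totals = defaultdict(lambda: [0, 0])  # group -> [interactions, impressions]
    for post in posts:
        group = getattr(post, key)
        totals[group][0] += post.interactions
        totals[group][1] += post.impressions
    # Guard against groups with zero impressions.
    return {g: (i / n if n else 0.0) for g, (i, n) in totals.items()}


# Placeholder numbers for illustration only, not real results.
posts = [
    Post("TikTok", "dance", 1000, 80),
    Post("Instagram", "dance", 500, 25),
    Post("LinkedIn", "stylised still", 400, 12),
]

ranked = sorted(engagement_by(posts, "effect").items(),
                key=lambda kv: kv[1], reverse=True)
for effect, rate in ranked:
    print(f"{effect}: {rate:.1%}")
```

Passing `"platform"` instead of `"effect"` as the key answers the other Friday question: which platforms respond best to motion versus stylised stills.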

What I Learned About Getting Good Results

The AI is only half the equation. Your source material determines most of the output quality, and learning what makes a good input is its own skill.

- Full body visibility matters enormously. The model needs complete anatomical context to generate convincing movement. Cropped-at-the-waist portraits produce awkward, truncated animations.

- Even, natural lighting produces the cleanest results. Harsh directional shadows create flickering artifacts in the output as the AI struggles to maintain consistent illumination across frames.

- Simple backgrounds hold their structure far better. Busy environments with lots of detail produce edge distortion and visual noise that distracts from the subject.

- Unfiltered, original photos work best. Heavy Instagram presets — especially those that dramatically shift colour temperature or add grain — confuse the spatial analysis and degrade output quality.

- One person per frame. Group photos produce unpredictable results because the model doesn’t reliably identify which subject to animate.

These aren’t limitations to work around. They’re design principles to internalise. Once I started shooting specifically for AI processing — clean backgrounds, even lighting, full body, no filters — my success rate jumped from maybe one in five to four in five.
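To put that jump in perspective, here is a rough back-of-the-envelope sketch. It assumes every attempt succeeds independently with the same probability, which is a simplification, and it uses 90 seconds per generation as an illustrative figure. Under those assumptions the expected number of attempts per usable clip is one divided by the success rate: about 5 attempts at one in five, about 1.25 at four in five.

```python
def expected_attempts(success_rate: float) -> float:
    """Mean number of generations needed for one usable result.

    Assumes each attempt succeeds independently with the same
    probability (a geometric distribution), which is a simplification.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1 / success_rate


def expected_seconds(success_rate: float, seconds_per_attempt: float) -> float:
    """Mean generation time per usable result, ignoring upload and review."""
    return expected_attempts(success_rate) * seconds_per_attempt


before = expected_attempts(1 / 5)  # rough "one in five" rate
after = expected_attempts(4 / 5)   # rough "four in five" rate
print(f"attempts per keeper: {before:.2f} before, {after:.2f} after")
print(f"seconds per keeper at 90 s each: "
      f"{expected_seconds(1 / 5, 90):.1f} before, "
      f"{expected_seconds(4 / 5, 90):.1f} after")
```

The rates themselves are rough estimates, so treat the output as an order-of-magnitude guide rather than a measurement.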

The Honest Constraints

No tool is everything, and pretending otherwise serves no one. It’s worth being transparent about what this technology cannot do yet:

- Video clips cap at 5-15 seconds. These are social media teasers and attention hooks, not long-form content.

- Results vary between generations. You’ll get similar outputs from the same input, but never identical ones. This means you sometimes need two or three attempts to get the best version.

- Complex or unusual poses don’t always translate cleanly. The AI favours natural, balanced positions and can struggle with extreme angles or unconventional body language.

- You choose the style, not the choreography. Motion patterns come from training data, not from your specific creative direction. You influence the mood, not the movement.

These constraints define the tool’s role with useful clarity: not a replacement for professional video production, but a creative amplifier for anyone who needs visual content at volume and speed.

Who This Actually Changes Things For

Clear fit:

- Solo creators publishing across multiple platforms simultaneously who can’t afford a video editor

- Small marketing teams producing content for clients without dedicated video production budgets

- Influencers who know motion content performs better but lack the technical skills or equipment

- Anyone who’s been avoiding video entirely because the learning curve and overhead felt insurmountable

Worth waiting:

- Long-form video producers (YouTube essays, documentaries, course content)

- Brands requiring frame-perfect consistency across every single asset in a campaign

- Projects that demand specific choreography, precise timing, or biomechanical realism

Where the Effort Lives Now

The real change isn’t technical. It’s psychological.

Less time goes into mechanics. More time goes into meaning.

You stop wrestling with export settings and start thinking about what story you’re trying to tell. You stop searching stock libraries for the least-bad option and start creating exactly what you imagined. When creative friction disappears, curiosity expands. And when curiosity expands, the work gets more interesting — not because the tools are perfect, but because you’re finally free to focus on the part that actually matters.

That portrait I animated three months ago? It took ninety seconds to generate. The campaign it became part of ran for six weeks across four platforms and outperformed every static post I’d published that quarter. Not because AI is magic. Because motion is attention. And in this economy, attention is everything.

Disclaimer

The views and experiences shared in this article are based on the author’s personal experimentation and opinions regarding AI-powered creative tools. The tools, platforms, and technologies mentioned, including but not limited to GenMix AI and other AI motion or image-generation models, are referenced for informational and illustrative purposes only.

Results generated by AI platforms may vary depending on input quality, platform updates, algorithm changes, and user expertise. This article does not constitute professional, technical, or commercial advice, nor does it guarantee similar outcomes for other users.