ToImage AI Review For Real Creative Work

Most AI image tools look impressive when viewed as demos. The real question is what happens after the first nice result. Can the workflow support repeated use? Can a marketer adapt one approved asset into several campaign directions? Can a creator keep the same visual idea but test different tones without rebuilding everything from zero? That is the lens I used when looking at ToImage AI's image-to-image workflow. Instead of asking whether it can generate something beautiful once, I looked at whether it feels usable for real creative work.

That distinction matters because many image tools are strongest at surprise and weakest at repeatability. In my observation, the products that remain useful are the ones that lower friction after the first generation. They help you keep momentum, compare options, and continue refining without turning every new request into a separate project. From that perspective, ToImage AI is more interesting as a workflow tool than as a simple novelty engine.

What The Product Is Actually Trying To Solve

Publicly, the platform does not frame itself as one fixed model with one style. It presents itself as a place where users can generate images, transform images, and even move toward video creation through multiple model options. That broader structure affects how the platform should be judged.

This Is More About Transformation Than Spectacle

In practical use, many people already have a source image that is good enough to matter. The challenge is not imagining a new universe from scratch. It is taking something almost usable and making it better aligned with a goal. That may mean a cleaner product visual, a more cinematic portrait, a stronger editorial tone, or a more consistent brand feel.

The Starting Image Carries More Value Than People Think

A source image already contains framing, subject emphasis, composition logic, and a baseline emotional tone. That is why image-to-image workflows can feel more efficient than pure text generation. They are not replacing the image. They are redirecting it.

How The Public Workflow Feels In Practice

The public flow is simple: start with a prompt or upload an image, describe the change, choose a model, generate the output, then iterate from there. That sounds basic, but it is important because a lot of usability comes from how quickly a user can move through those decisions.

Step 1. Start From Something Real

The workflow begins with either text or an uploaded image. For evaluation purposes, the more interesting path is image-to-image, because that is where the platform seems to distinguish itself. Starting from a real source image makes the process feel grounded and purposeful.

Step 2. Describe What Should Evolve

The second step is prompt-based direction. Publicly, the platform supports transformation through written instructions, whether the change involves style transfer, enhancement, background change, realism shifts, or broader reimagining. In my view, that is the right level of control for a wide audience. It is simple enough to learn but flexible enough to matter.

Step 3. Match The Model To The Task

This is one of the more serious aspects of the platform. Instead of hiding the model layer, ToImage AI exposes it. That means the user is not locked into one output philosophy. Some models appear better suited to hyper-realistic image transformation, some to speed, some to precision editing, and some to image-to-video workflows.

Step 4. Compare Before You Commit

A strong image workflow should make comparison easy. Publicly, the platform supports multi-output generation in some paths and encourages iterative refinement. That is valuable because the best result is often not obvious in advance. In my broader testing of this category, comparison is where real decision-making happens.

What Feels Strongest About The Product

The platform’s most convincing strength is not any single model name. It is the structure created by having several routes for different types of visual work.

It Respects Different Kinds Of Creative Needs

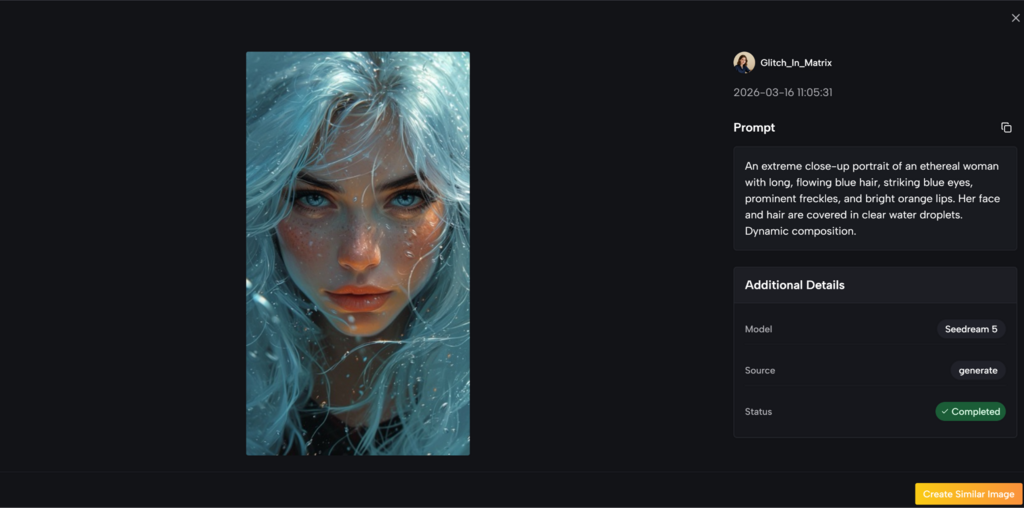

Publicly, the image side highlights model options such as Nano Banana, Nano Banana 2, Seedream, Flux, GPT-4o, and others. That matters because not every user wants the same thing. Some want realism. Some want speed. Some want more precise editing. That separation makes the product feel more practical.

Reference-Led Work Appears To Be A Priority

One of the more useful public claims is support for multiple reference images in Nano Banana. According to the platform, up to four references can be supplied to support style consistency and character continuity. This is a strong signal because consistency is one of the hardest things to maintain in AI visual workflows. A platform that acknowledges that problem feels closer to real use than one that only highlights isolated hero results.

The Bridge To Video Adds Long-Term Value

The product also connects still-image work to video-oriented models such as Veo 3, Veo 3.1, Kling, Wan, Runway, and Seedance. Even if a user begins with image transformation only, that shared environment creates a smoother path toward motion work later.

How The Model Layer Changes The Review

A good review should not treat all models as interchangeable. The platform itself does not. Publicly, each route seems to carry a different promise.

Nano Banana Looks Best For Controlled Realism

Nano Banana is framed around hyper-realistic image-to-image transformation and guided image change. If that public framing reflects regular use, it makes sense as the model for portraits, product shots, and other tasks where source integrity still matters.

Nano Banana 2 Feels More Production-Oriented

Nano Banana 2 appears to add higher resolution options and multiple outputs per request. That is a practical improvement for people comparing polished variants rather than searching for one lucky result.

Seedream Helps When Speed Matters More Than Perfection

Speed is not a secondary benefit. In many creative environments, speed is part of quality because it changes how many options a person can reasonably test. Seedream appears positioned for that kind of use.

Flux Suggests Better Fine-Grained Editing Control

Flux stands out because it is publicly described in terms of context-aware changes and object-level precision. That makes it feel more like an editing route than a broad style engine.

This Helps Serious Users Narrow The Tool Faster

One of the biggest weaknesses in AI products is forcing users to discover every strength through trial alone. ToImage AI at least gives a more legible map. Even when the user still needs experimentation, the platform appears to provide a clearer starting logic.

Where The Experience Looks Most Useful

The best use cases are not abstract or purely artistic. They are operational.

Campaign Asset Expansion

A team may begin with one approved product image and need new treatments for social, paid ads, and page design. That is a natural fit for a transformation-led workflow because the source image already holds brand approval.

Portrait And Character Refinement

A creator can keep the same subject while testing different visual moods. Instead of changing the whole concept, the workflow lets the user explore atmosphere, style, and finish.

Draft Concept To Publishable Asset

A rough concept is often good enough to explain an idea but not good enough to publish. Tools like this matter because they shorten the distance between concept and usable asset.

A Simple Product Review Table

| Review Area | What Stands Out | Practical Effect |
| --- | --- | --- |
| Workflow clarity | Upload, prompt, choose model, generate | Easy to understand without a steep learning curve |
| Model variety | Different paths for realism, speed, precision, and video | Better fit for different creative tasks |
| Reference support | Multiple references in some image paths | Stronger consistency across outputs |
| Iteration logic | Compare and refine instead of relying on one result | More useful for real production work |
| Expansion potential | Image and video models in one environment | Easier to extend still assets into motion later |

What Still Feels Limited Or Demands Care

A measured review should also say where friction remains.

Prompt Quality Still Shapes The Ceiling

The platform can streamline the process, but it cannot invent clarity on behalf of the user. Vague prompts will still lead to vague outcomes. In my observation, the strongest results usually come when the user defines both the preserved elements and the desired changes.
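To make that advice concrete, here is a minimal sketch of how a prompt could be assembled so that it always states both what to keep and what to change. The helper name `build_edit_prompt` and the "Keep ... Change ..." wording are my own illustration, not anything ToImage AI documents or requires. The output is plain text to paste into any prompt field.

```python
def build_edit_prompt(keep, change):
    """Combine preserved elements and desired changes into one instruction.

    Hypothetical helper for illustration only; it is not part of any
    ToImage AI interface and simply formats text.
    """
    # Refuse to build a prompt that leaves either half undefined,
    # since vague prompts tend to produce vague results.
    if not keep or not change:
        raise ValueError("list at least one element to keep and one to change")
    return f"Keep {', '.join(keep)}. Change {', '.join(change)}."


prompt = build_edit_prompt(
    keep=["the subject's pose", "the product label"],
    change=["the background to a warm studio backdrop"],
)
print(prompt)
```

The point of the structure is discipline, not syntax: writing the "keep" list first forces a decision about which parts of the source image are not negotiable before any change is described.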

Iteration Is Still Necessary

This is not a one-click certainty tool. Some outputs will look interesting but not useful. Others will preserve the wrong parts of the source image. That is normal. The strength of the platform is not that it removes iteration, but that it seems to make iteration more manageable.

Model Choice Can Be A Small Learning Curve

A multi-model platform is more flexible, but it also asks more from the user. People may need several sessions before they build intuition about which model best suits realism, speed, or more surgical edits.

My Overall Read On The Product

On review, the platform feels stronger when judged by workflow quality than by headline hype. It appears to understand that most users do not simply want more images. They want better transitions from draft to asset, from source to variation, and from visual idea to usable output. That is a more durable value proposition than raw spectacle.

ToImage AI seems most compelling for people who already have visual material worth extending. It respects the role of the source image, gives users multiple model paths, and turns iteration into a more normal part of the process. That does not guarantee perfect results every time. But it does make the platform feel closer to real creative work, which is often a better sign than any single stunning example.

Disclaimer

The information provided in this article, “ToImage AI Review For Real Creative Work,” is intended for general informational and educational purposes only. The views and opinions expressed are based on independent observations and do not constitute professional, technical, or commercial advice.

This review is not affiliated with, sponsored by, or endorsed by ToImage AI or any of the platforms, tools, or models mentioned. Features, capabilities, and performance described in the article are based on publicly available information and general user experience, which may change over time as the platform evolves.